
Over the past several years, we’ve written extensively about why process design and workflow automation are foundational for modern financial services firms.

In posts like “Planning for Success with Process Design” and “Establishing a Digital‑First Practice,” we made a consistent case: automation doesn’t start with tools; it starts with clarity. Controls, accountability, and auditability must be designed into workflows before technology is layered on.

As interest shifts rapidly from automation to AI, we’re now seeing a familiar pattern repeat itself. Organizations are asking how to introduce AI into workflows that are still fragmented, exception‑heavy, and governed informally. The risk is not theoretical. When workflows are immature, AI does not fix them; it accelerates their failure modes.

AI amplifies your current process design — good and bad — at scale.

This post builds directly on our earlier work. It goes one level deeper, focusing on the missing connective tissue between “workflow automation” and “safe AI adoption”: workflow orchestration. We’ll outline a practical, phased approach that shows how mature workflow design, orchestration, and measurement create the conditions where AI can be introduced safely, and where agentic automation actually makes sense.

Why Broken Workflows and AI Don’t Mix

When workflows are implicit rather than explicit, three problems emerge:

- Operations teams struggle with fragmented, exception‑heavy processes that rely on tribal knowledge.
- Management lacks visibility into true cost, risk, and throughput because coordination happens manually across systems.
- AI magnifies the flaws, producing faster, but not better, outcomes.

AI does not fix ambiguity, missing inputs, unclear policy, or weak governance. It simply magnifies their impact.

The prerequisite for AI is not intelligence. It is structure.

A Phased Approach to Automation

Rather than jumping directly to AI, SideDrawer recommends a phased model that builds confidence, governance, and ROI at each step.

Phase 1: Workflow Maturity Comes First

Before any automation begins, workflows must be made explicit. Every workflow should be expressible as a clear state machine: State → Event → Transition → Action → Evidence. We propose introducing the concept of Evidence to the state machine to capture the institutional memory expected by clients and regulators.

Example of a simple finite-state machine

This is not bureaucracy. It is what makes automation safe. For each workflow, organizations should require a small set of mandatory artifacts:

- A state diagram
- Entry and exit criteria for each state
- A responsible actor for every transition
- Evidence generated at each transition
- Regulatory record classification

If a process cannot be described this way, it is not ready for automation, and certainly not ready for AI.
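To make the State → Event → Transition → Action → Evidence model concrete, here is a minimal Python sketch. The onboarding states, role names, action names, and record classes are hypothetical and chosen only for illustration; a real workflow would define its own.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Transition:
    target: str        # state the case moves to
    actor_role: str    # role responsible for this transition
    action: str        # action triggered by the transition
    record_class: str  # regulatory record classification (hypothetical labels)


@dataclass(frozen=True)
class Evidence:
    case_id: str
    from_state: str
    event: str
    to_state: str
    actor: str
    record_class: str
    recorded_at: datetime


@dataclass
class Case:
    case_id: str
    state: str = "draft"
    evidence: list = field(default_factory=list)


# Hypothetical onboarding workflow, keyed by (current state, event).
TRANSITIONS = {
    ("draft", "submit"): Transition("in_review", "client", "notify_reviewer", "client_record"),
    ("in_review", "approve"): Transition("approved", "reviewer", "open_account", "approval_record"),
    ("in_review", "return"): Transition("draft", "reviewer", "request_corrections", "client_record"),
}


def apply_event(case: Case, event: str, actor: str, actor_role: str) -> str:
    """Apply one event and return the action to run; evidence is written on every transition."""
    transition = TRANSITIONS.get((case.state, event))
    if transition is None:
        raise ValueError(f"No transition for event '{event}' in state '{case.state}'")
    if actor_role != transition.actor_role:
        raise PermissionError(f"Role '{actor_role}' may not perform '{event}'")
    case.evidence.append(
        Evidence(case.case_id, case.state, event, transition.target,
                 actor, transition.record_class, datetime.now(timezone.utc))
    )
    case.state = transition.target
    return transition.action
```

Anything not listed in the transition table is rejected, so an undefined path fails loudly instead of being handled by tribal knowledge.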

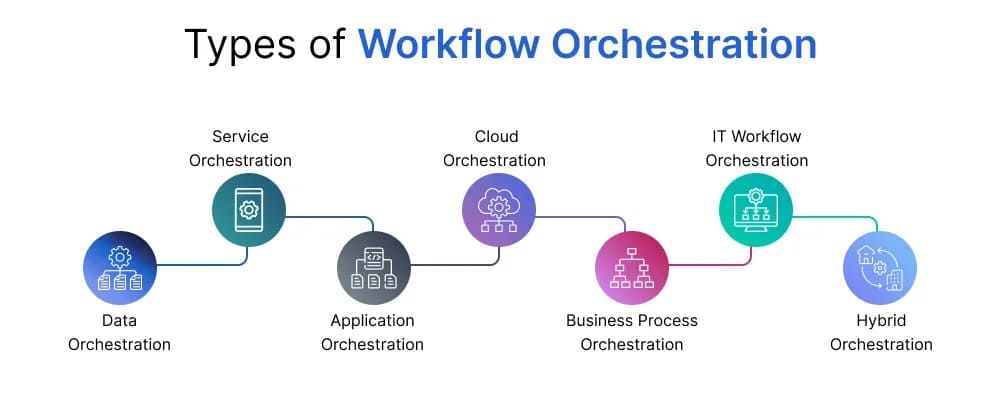

Workflow Orchestration as the Backbone

Once workflows are explicit, orchestration becomes the backbone that makes automation reliable.

At its core, a workflow orchestration platform provides:

- State management: knowing where every case is at all times
- Task routing: assigning work to humans or systems
- Decision gates: enforcing policy and approvals
- Evidence capture: persisting documents and actions
- Audit trails: reconstructing any case end‑to‑end
- SLA tracking: measuring throughput and delay

Just as important is what orchestration must not own.

Orchestration should control:

- Case lifecycle
- State transitions
- Approval checkpoints
- Escalation paths
- Timeouts and retries

But it should not own:

- Business logic (rules engines do this better)
- Calculations
- Document interpretation
- Human judgment

This separation of concerns is foundational for safe scaling.
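One way to picture this separation is a decision gate where the orchestration layer owns retries, escalation, and the state change, while the decision itself is injected from outside. The sketch below assumes a case is a plain dictionary and that the rule is any callable returning "approve" or "reject"; both are illustrative assumptions, not a prescribed interface.

```python
from typing import Callable


def run_decision_gate(case: dict, decide: Callable[[dict], str], max_retries: int = 2) -> str:
    """Orchestration owns retries, escalation, and state; `decide` owns the business logic."""
    for _ in range(max_retries + 1):
        try:
            decision = decide(case)
            break
        except TimeoutError:
            continue  # retry policy lives in orchestration, not in the rule
    else:
        decision = "escalate"  # every attempt timed out

    # Any decision the orchestrator does not recognize is escalated to a human.
    next_state = {"approve": "approved", "reject": "rejected"}.get(decision, "escalated")
    case["state"] = next_state
    return next_state


def documents_complete_rule(case: dict) -> str:
    """Hypothetical rule; in practice this would live in a rules engine."""
    return "approve" if case["documents_complete"] else "reject"


def unavailable_rule(case: dict) -> str:
    """Stand-in for a rules service that never responds."""
    raise TimeoutError("rules service unavailable")
```

Swapping `documents_complete_rule` for a different rule changes the policy without touching the lifecycle code, which is the point of keeping the two apart.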

Phase 2: Baseline Automation (No AI Yet)

With orchestration in place, automation can focus on eliminating coordination friction — not judgment.

The following high‑confidence automation targets deliver immediate ROI and generate the data required for future AI use:

- Case creation
- Task assignment
- Reminders and SLAs
- Approval routing
- Document storage and tagging
- Status notifications

From day one, measurement matters. Teams should track:

| Metric | Why it matters |
| --- | --- |
| Cycle time per state | Find bottlenecks |
| Rework/NIGO rate | Identify quality issues |
| Exception frequency | Reveal complexity |
| Human touches per case | Cost proxy |
| Evidence completeness | Audit readiness |

This stage alone often delivers material efficiency gains, without introducing model risk.
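As a rough illustration of how some of these metrics could be derived, the sketch below computes cycle time per state, NIGO rate, and human touches per case from a state-entry log. The log layout, the state names, and the sample rows are invented for the example; they are not real data.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

# Hypothetical log rows: (case_id, state_entered, timestamp, actor_type).
EVENTS = [
    ("c1", "submitted", datetime(2024, 1, 1, 9), "system"),
    ("c1", "in_review", datetime(2024, 1, 1, 10), "human"),
    ("c1", "nigo", datetime(2024, 1, 1, 14), "human"),
    ("c1", "in_review", datetime(2024, 1, 2, 9), "human"),
    ("c1", "approved", datetime(2024, 1, 2, 11), "human"),
    ("c2", "submitted", datetime(2024, 1, 1, 9), "system"),
    ("c2", "in_review", datetime(2024, 1, 1, 12), "human"),
    ("c2", "approved", datetime(2024, 1, 1, 13), "human"),
]


def cycle_time_hours(events):
    """Mean hours spent in each state before the next state was entered."""
    by_case = defaultdict(list)
    for case_id, state, ts, _ in events:
        by_case[case_id].append((ts, state))
    durations = defaultdict(list)
    for steps in by_case.values():
        steps.sort()  # chronological order within each case
        for (start, state), (end, _) in zip(steps, steps[1:]):
            durations[state].append((end - start).total_seconds() / 3600)
    return {state: mean(hours) for state, hours in durations.items()}


def nigo_rate(events):
    """Share of cases that entered the NIGO (not in good order) state at least once."""
    cases = {e[0] for e in events}
    nigo_cases = {e[0] for e in events if e[1] == "nigo"}
    return len(nigo_cases) / len(cases)


def human_touches_per_case(events):
    """Average number of state entries performed by a human, per case."""
    cases = {e[0] for e in events}
    touches = sum(1 for e in events if e[3] == "human")
    return touches / len(cases)
```

The terminal state never appears in the cycle-time result because no later state entry closes it; that is a deliberate simplification of this sketch.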

Phase 3: Workflow Improvement Before AI

Before introducing AI, most workflows still have room to improve through data and design.

Bottleneck analysis typically reveals:

- States where cases consistently stall
- High variance in completion time
- Frequent manual escalation

Each exception should then be classified by root cause:

- Missing input
- Ambiguous input
- Unclear policy
- Tool limitations
- Human error

The key insight is that many so‑called “AI problems” are actually coordination and design problems. Fixing them upstream reduces risk and dramatically improves downstream automation outcomes.
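A simple tally is often enough to see which root causes dominate. The sketch below assumes each logged exception has already been tagged with one of the five causes listed above; the function name and the sample log are hypothetical.

```python
from collections import Counter
from enum import Enum


class RootCause(Enum):
    MISSING_INPUT = "missing input"
    AMBIGUOUS_INPUT = "ambiguous input"
    UNCLEAR_POLICY = "unclear policy"
    TOOL_LIMITATION = "tool limitation"
    HUMAN_ERROR = "human error"


def root_cause_breakdown(exceptions):
    """Return (cause, count, share) tuples, most frequent cause first."""
    counts = Counter(cause for _, cause in exceptions)
    total = sum(counts.values())
    return [(cause.value, n, round(n / total, 2)) for cause, n in counts.most_common()]


# Hypothetical exception log: (case_id, tagged root cause).
EXCEPTION_LOG = [
    ("c1", RootCause.MISSING_INPUT),
    ("c2", RootCause.MISSING_INPUT),
    ("c3", RootCause.UNCLEAR_POLICY),
    ("c4", RootCause.MISSING_INPUT),
]
```

If most exceptions turn out to be missing inputs, the fix is likely a better intake form or checklist rather than a model.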

Phase 4: Where AI Actually Belongs

AI is most effective when applied selectively along an automation spectrum.

A workflow is ready for agentic augmentation only if most of the following are true:

- It involves interpretation of unstructured inputs
- Exceptions are more costly than the happy path
- Humans are mostly explaining, summarizing, or re‑keying
- There is a clear handoff back to deterministic systems
- Human accountability is preserved

As a rule of thumb, at least four out of five should be “yes” before advancing.
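The rule of thumb can be written down as a small checklist so that the assessment is recorded rather than argued from memory. This is only a sketch: the field names paraphrase the five criteria above, and the default threshold of four comes from the rule of thumb.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ReadinessCheck:
    interprets_unstructured_inputs: bool
    exceptions_costlier_than_happy_path: bool
    humans_mostly_explain_summarize_rekey: bool
    clear_handoff_to_deterministic_systems: bool
    human_accountability_preserved: bool


def readiness_score(check: ReadinessCheck) -> int:
    """Count how many of the five criteria are answered 'yes'."""
    return sum(bool(getattr(check, f.name)) for f in fields(check))


def is_ready_for_agentic_augmentation(check: ReadinessCheck, threshold: int = 4) -> bool:
    return readiness_score(check) >= threshold
```

For example, a workflow that meets every criterion except a clear handoff back to deterministic systems scores four and passes; one that also lacks preserved human accountability scores three and does not.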

Phase 5: Introduce AI Safely with “Assist, Then Act”

AI should first be introduced in low‑risk, assisted modes that build trust without altering workflow structure.

Common examples include:

- Drafting client communications
- Summarizing case history
- Flagging missing information
- Suggesting next actions

Only after these are proven should organizations consider agentic orchestration, where AI can perform limited actions independently.

Even then, guardrails remain critical:

- Orchestration controls state
- Rules approve decisions
- Humans authorize irreversible actions

This is how autonomy is introduced without sacrificing governance.
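The three guardrails can be read as an ordered gate that every AI-proposed action must pass before it runs. The sketch below is an assumption-laden illustration: the allow-list, action names, and return labels are hypothetical, and the rule check is passed in as a callable to keep business logic outside the gate.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    name: str
    case_state: str
    irreversible: bool


# Hypothetical allow-list: which actions an agent may propose in which state.
ALLOWED_ACTIONS = {
    "in_review": {"request_missing_document", "send_status_update", "close_case"},
}


def gate(action: ProposedAction,
         rules_approve: Callable[[ProposedAction], bool],
         human_authorized: bool = False) -> str:
    """Decide what happens to an AI-proposed action."""
    # 1. Orchestration controls state: the action must be valid for the current state.
    if action.name not in ALLOWED_ACTIONS.get(action.case_state, set()):
        return "blocked_by_orchestration"
    # 2. Rules approve decisions: deterministic policy has the final say.
    if not rules_approve(action):
        return "blocked_by_rules"
    # 3. Humans authorize irreversible actions.
    if action.irreversible and not human_authorized:
        return "awaiting_human_authorization"
    return "execute"
```

Under these assumptions, a reversible action such as `send_status_update` can run immediately once rules approve it, while `close_case` waits until a person signs off.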

The SideDrawer Perspective

AI is not a shortcut around operational discipline. It is a multiplier.

Organizations that succeed with AI are the ones that invest first in workflow clarity, orchestration, and measurement. Those that skip these steps often find themselves scaling risk instead of value.

SideDrawer is purpose‑built to help regulated enterprises establish this foundation — turning complex, document‑centric workflows into explicit, governable systems that are ready for safe automation and, eventually, AI. If you are exploring AI initiatives and want to understand whether your workflows are truly ready, that is the conversation to have first.
